Part A.1: Shoot the Pictures

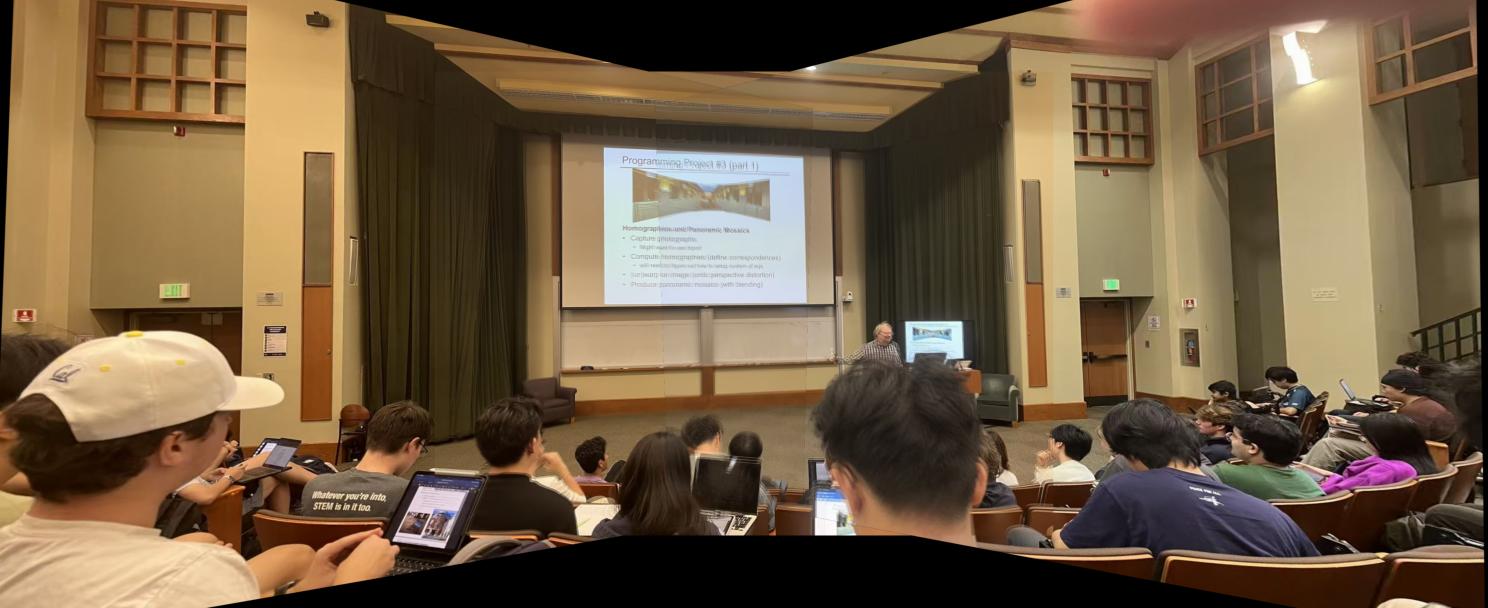

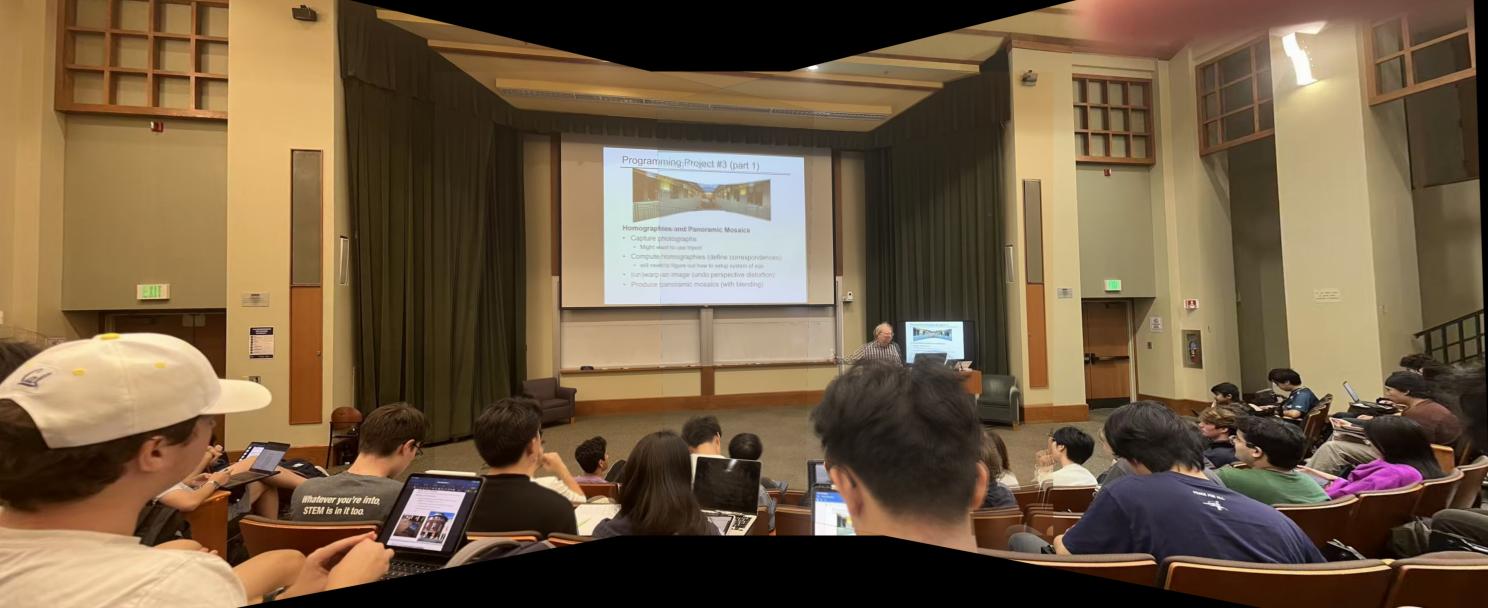

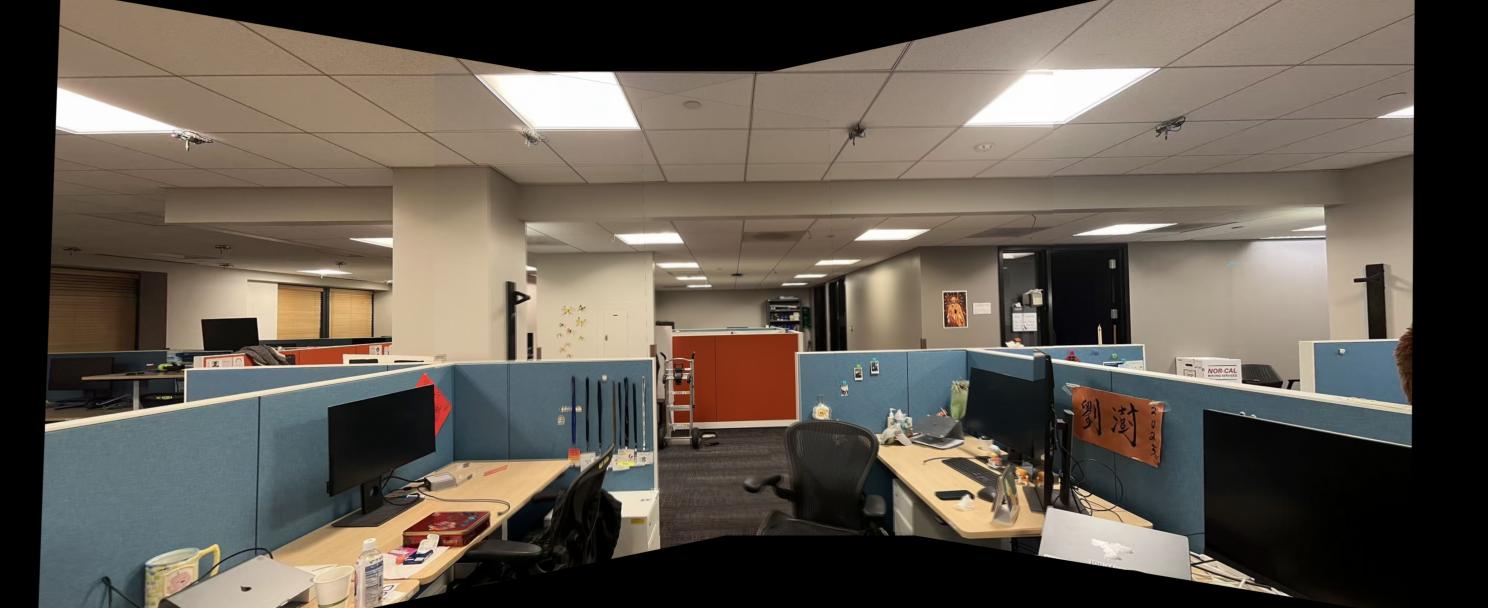

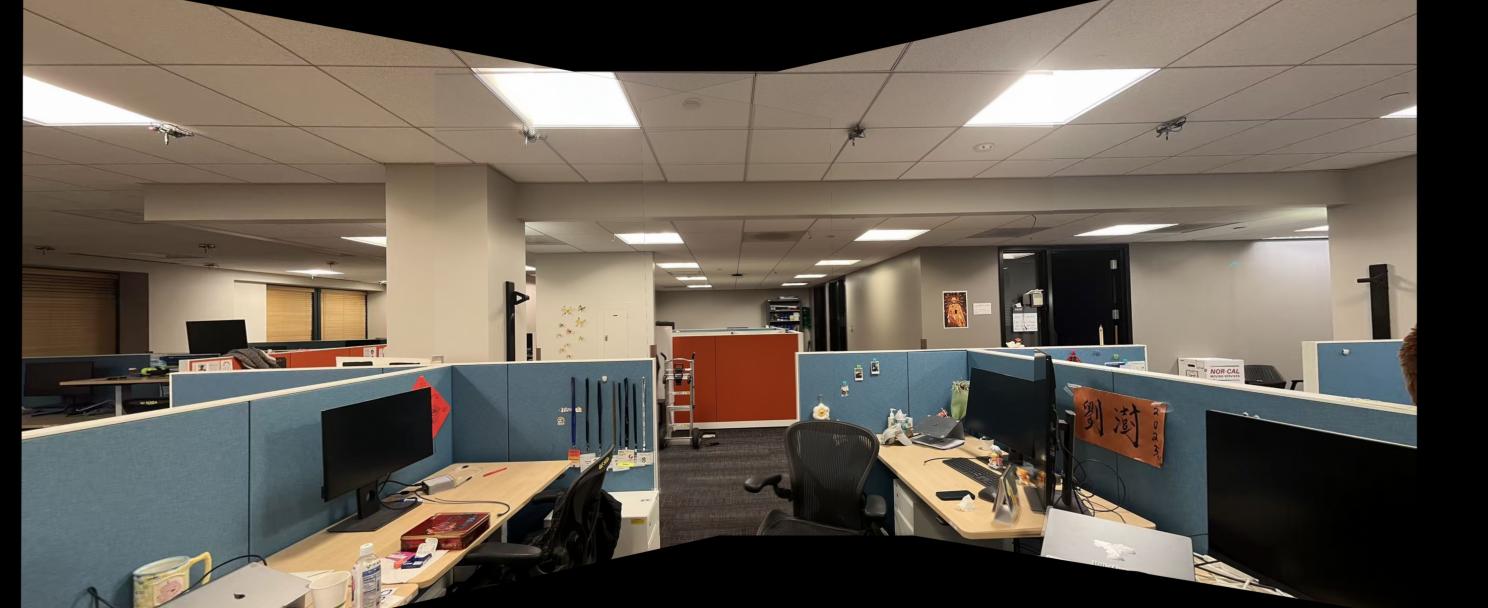

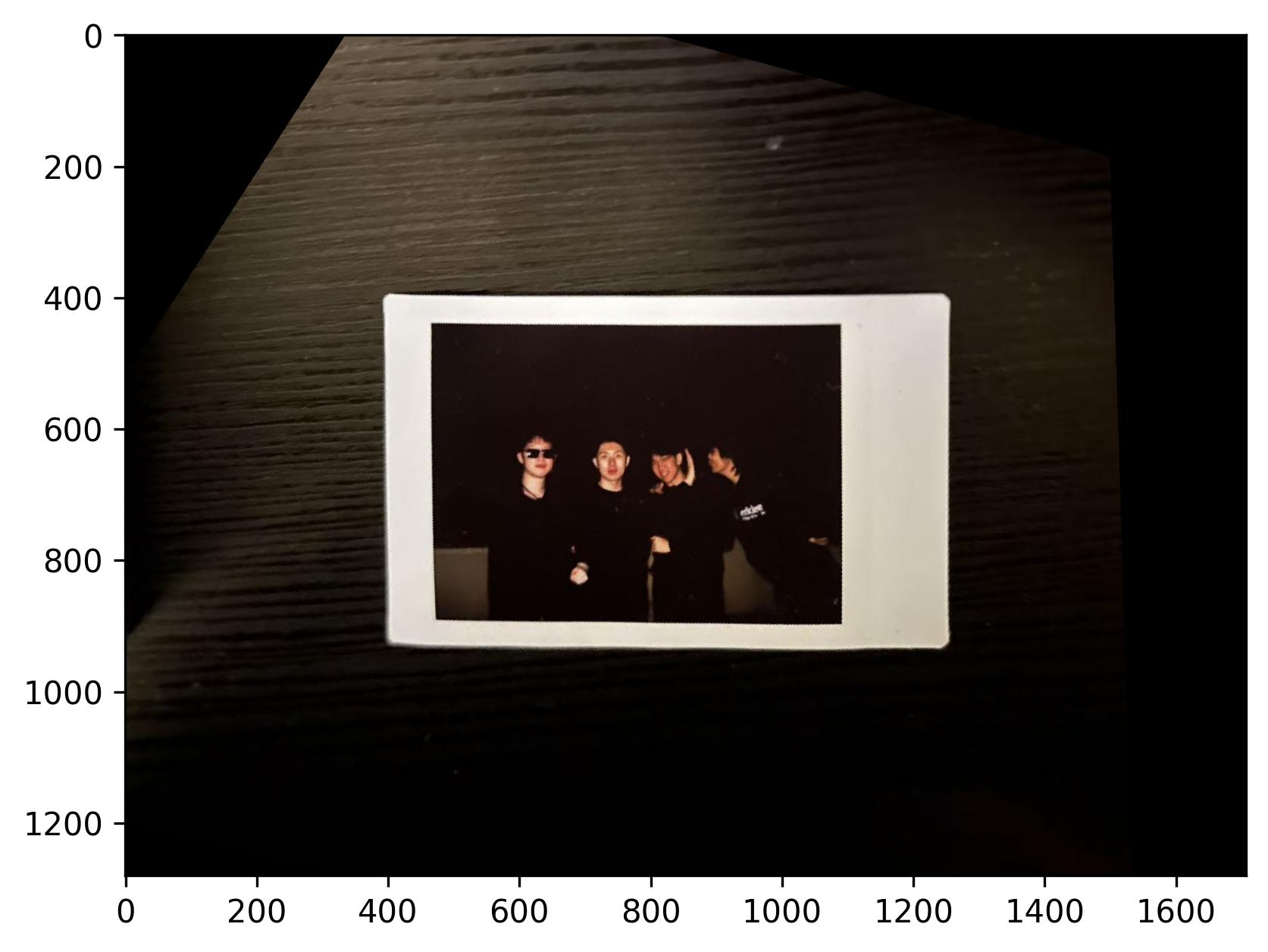

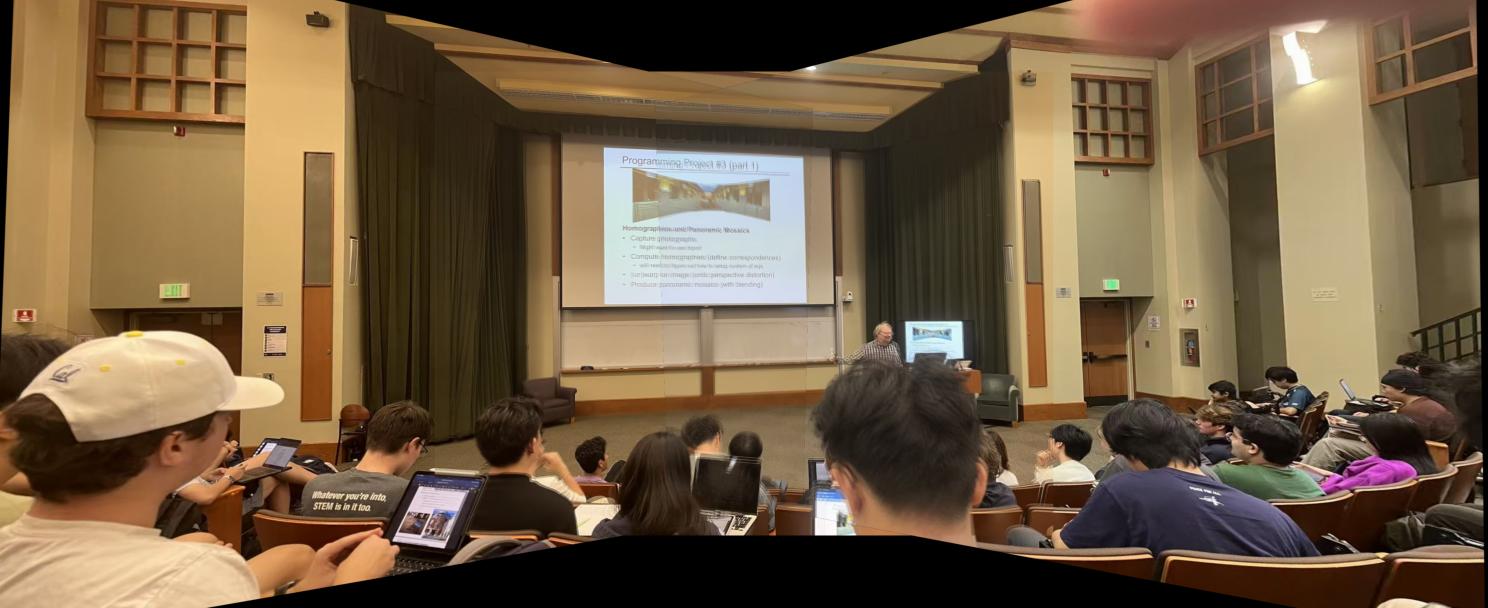

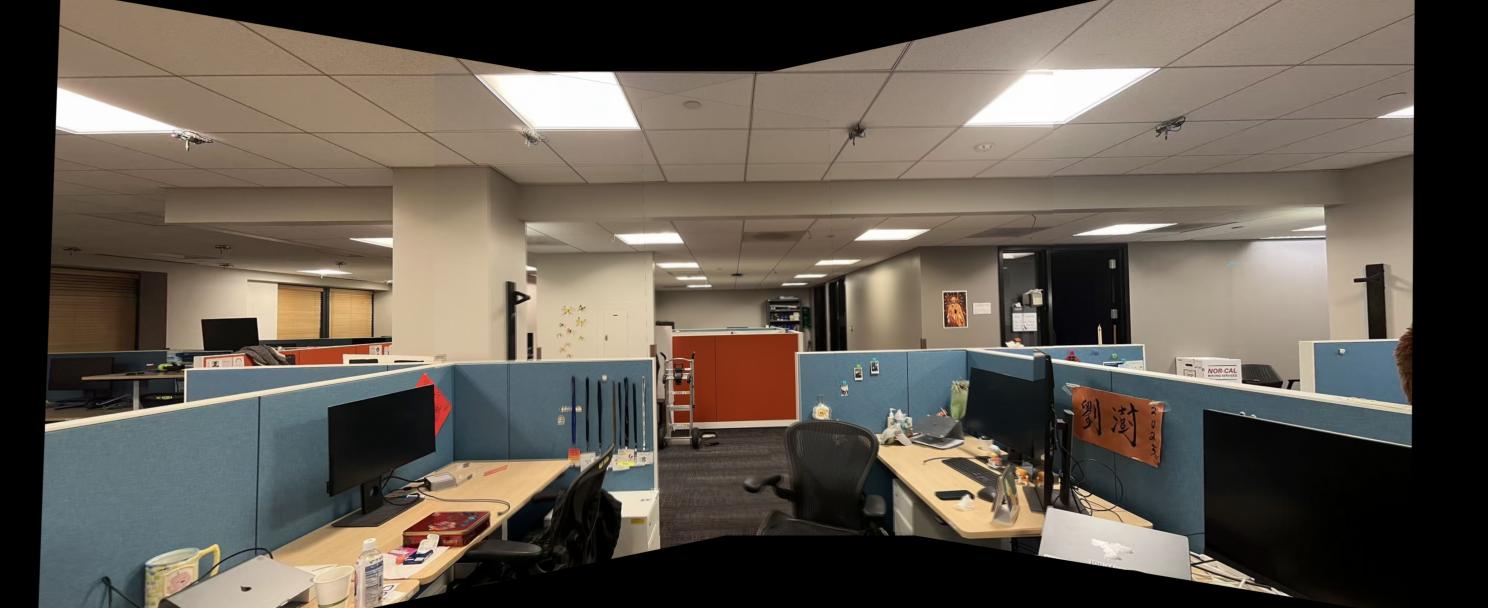

This part includes sets of pictures related by projective transformations, i.e., shot by rotating the camera while keeping the center of projection (COP) fixed. The later parts of the project use these pictures.

This project implements the automatic stitching of photo mosaics. For part A, the stitching is done by manually picking correspondence points between the images and using a homography matrix to project the images on the same plane.

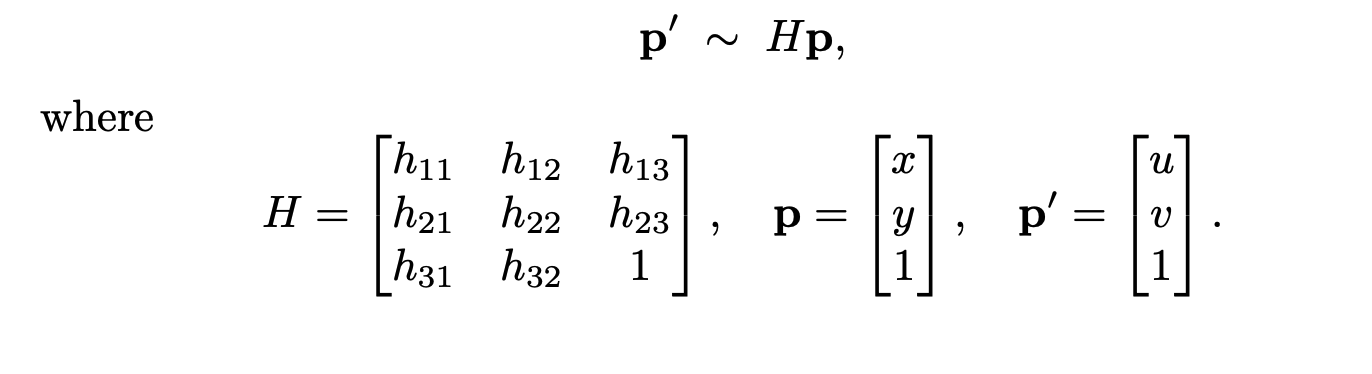

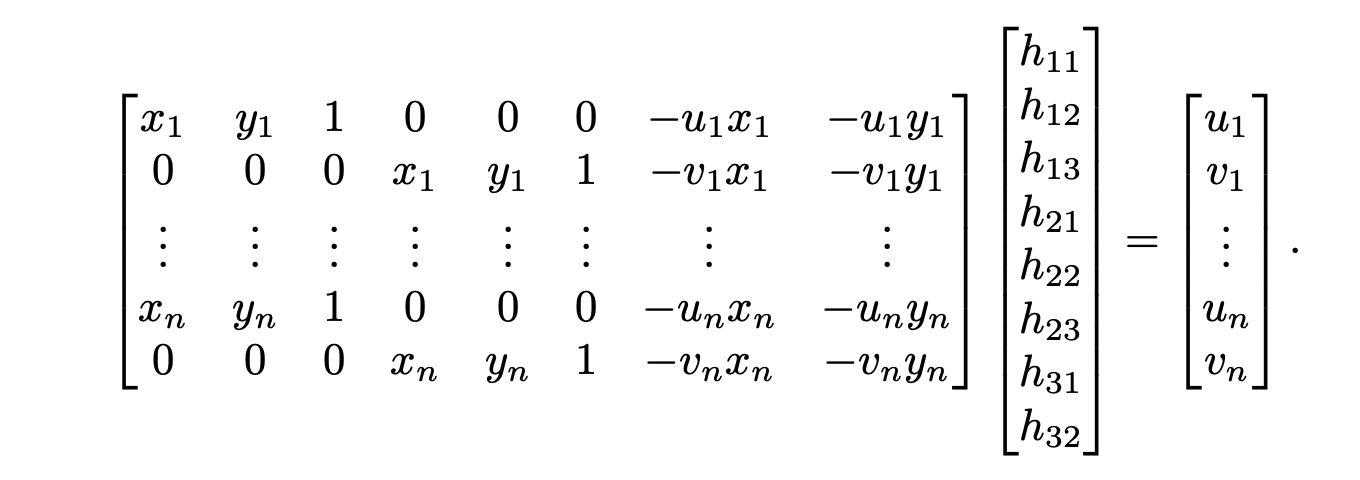

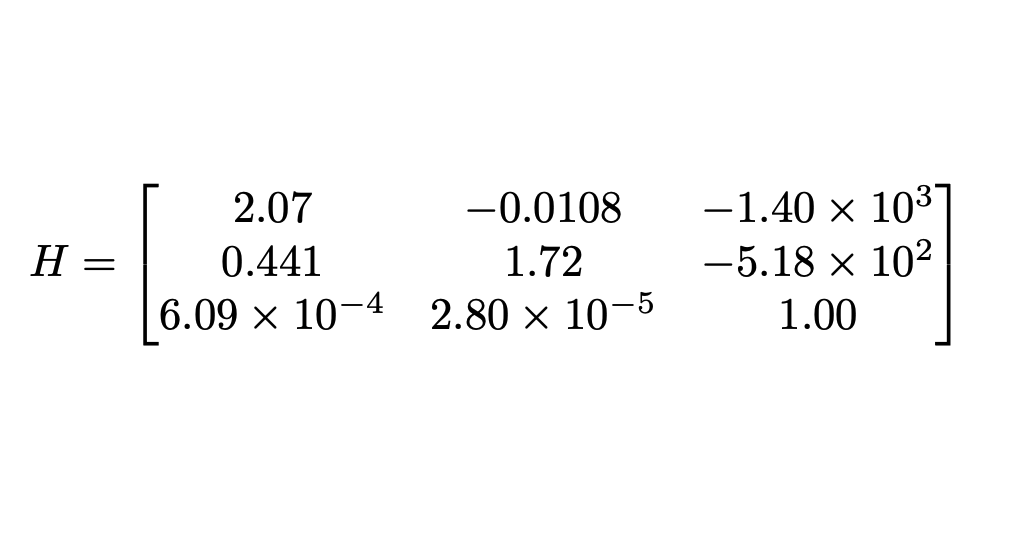

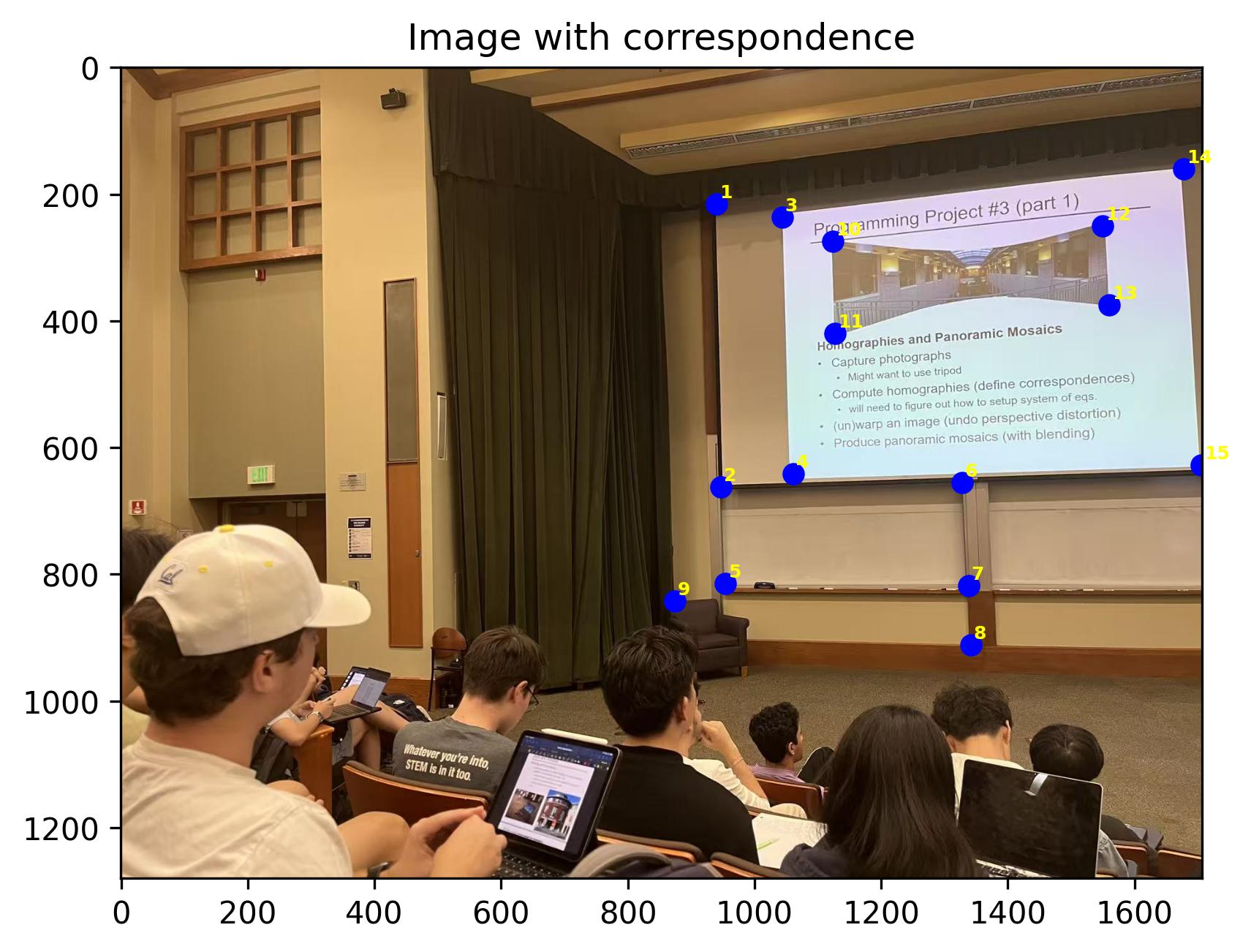

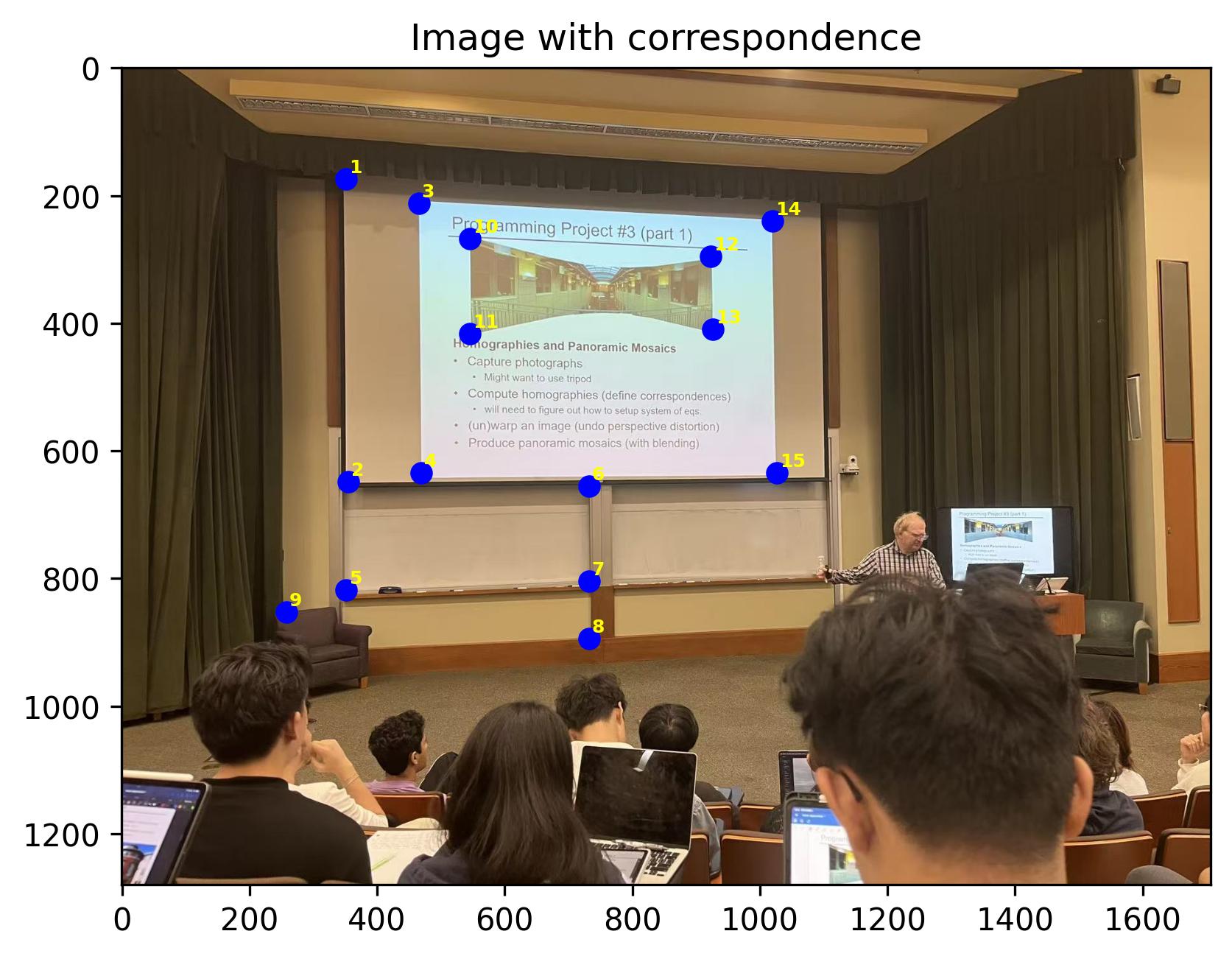

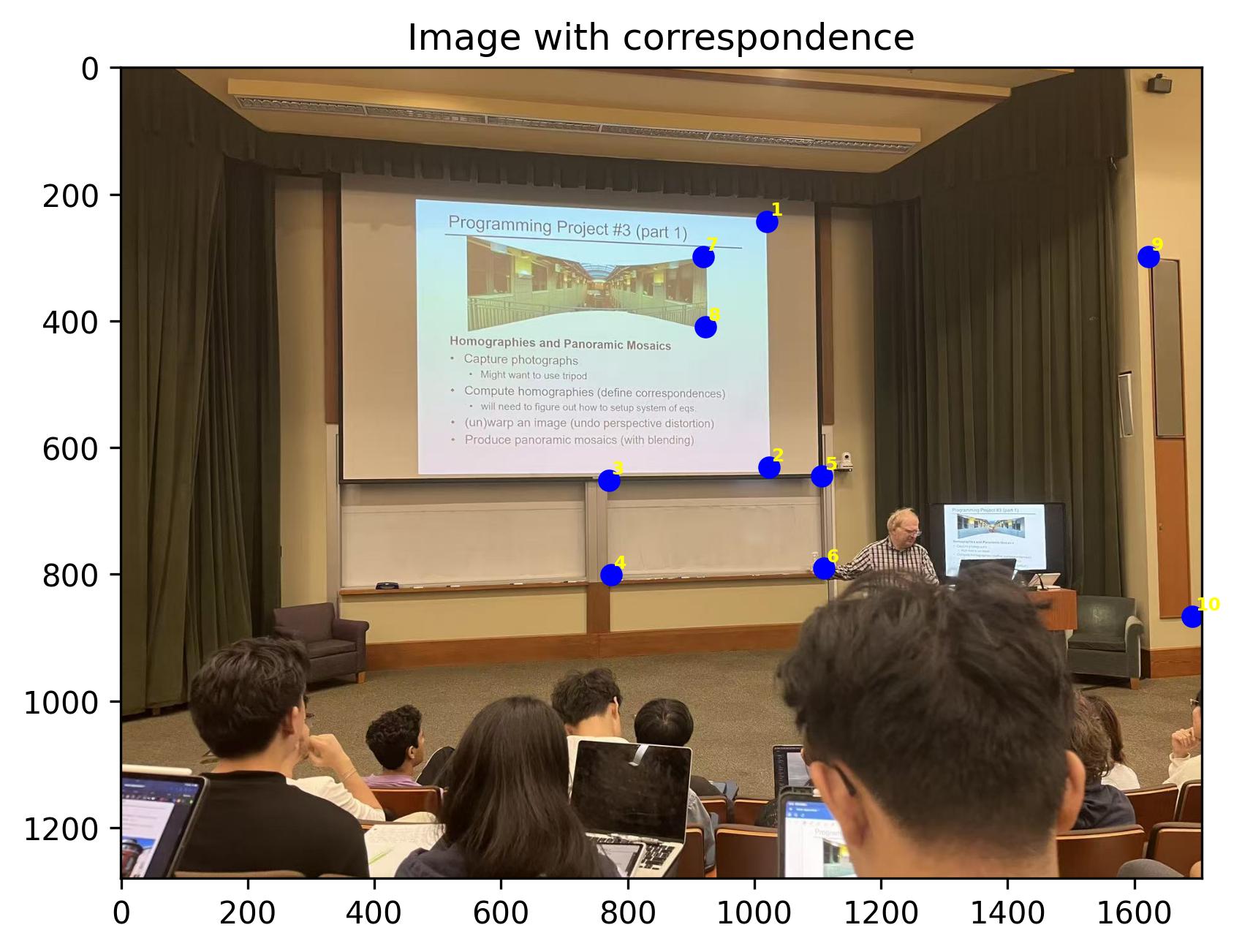

This part introduces how we can recover the homography matrix from a set of correspondence points. The homography matrix has 8 degrees of freedom and each correspondence contributes two equations, so we need at least 4 point pairs to recover it. Using more points makes the recovery more robust.

Since the bottom-right entry of the homography matrix is fixed at 1, we cannot solve for the full matrix directly. Instead, we expand the projection equations into a linear system in the remaining 8 unknowns and solve it with least squares.

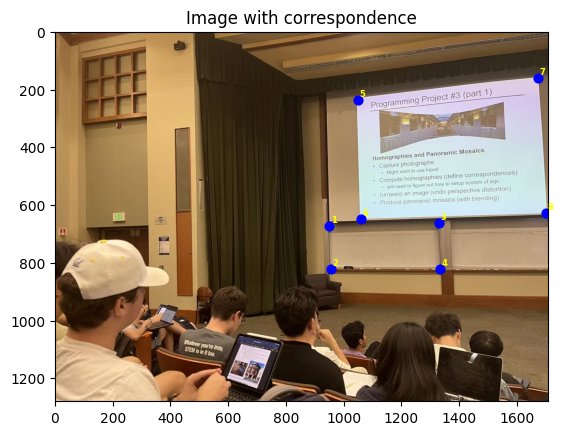

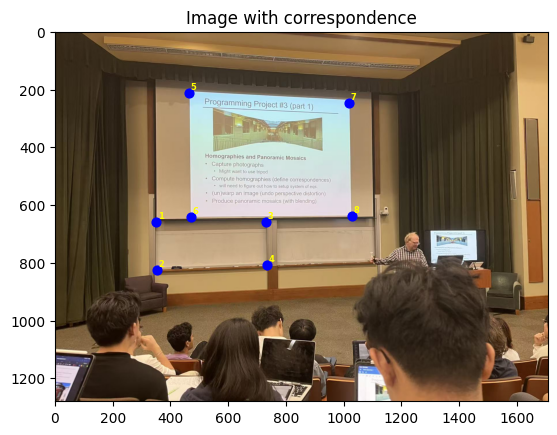

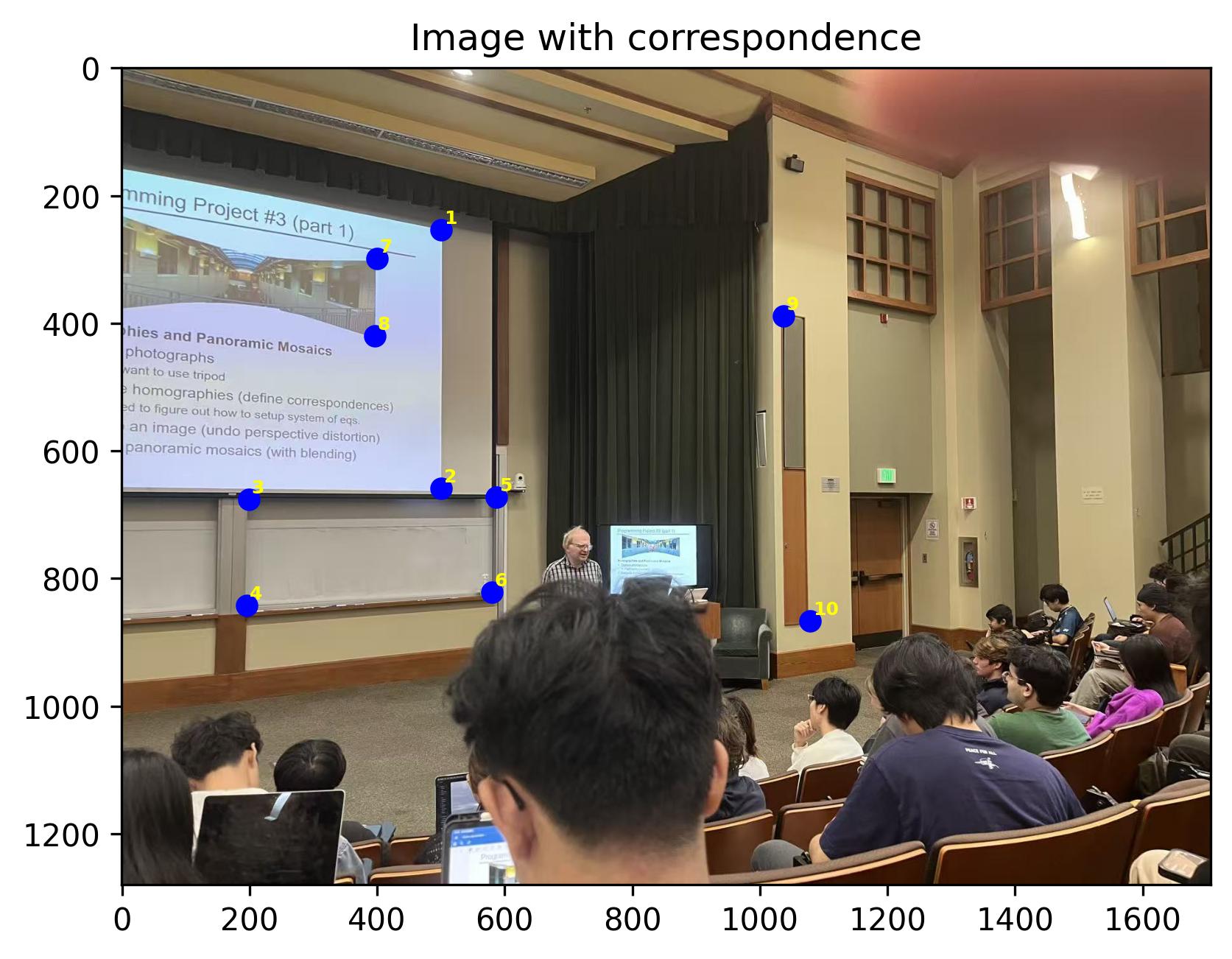
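A minimal NumPy sketch of the least-squares recovery described above. The function name `compute_homography` and the `(x, y)` point layout are assumptions made for illustration, not necessarily what the project code uses. Each correspondence `(x, y) -> (u, v)` contributes two rows to a linear system in the 8 unknown entries.

```python
import numpy as np

def compute_homography(src, dst):
    """Least-squares homography H (3x3, H[2, 2] = 1) mapping src -> dst.

    src, dst: (N, 2) arrays of (x, y) points with N >= 4.
    (Hypothetical helper; the name and argument layout are assumptions.)
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    n = src.shape[0]
    A = np.zeros((2 * n, 8))
    b = np.zeros(2 * n)
    for i, ((x, y), (u, v)) in enumerate(zip(src, dst)):
        # u = (h1 x + h2 y + h3) / (h7 x + h8 y + 1), rearranged to be linear in h
        A[2 * i] = [x, y, 1, 0, 0, 0, -u * x, -u * y]
        # v = (h4 x + h5 y + h6) / (h7 x + h8 y + 1)
        A[2 * i + 1] = [0, 0, 0, x, y, 1, -v * x, -v * y]
        b[2 * i] = u
        b[2 * i + 1] = v
    # Exact solve for N = 4 in general position, least squares for N > 4.
    h, *_ = np.linalg.lstsq(A, b, rcond=None)
    return np.append(h, 1.0).reshape(3, 3)
```

With exactly 4 correspondences the system is square; with more, `np.linalg.lstsq` minimizes the squared residual of the linearized equations, which is what makes extra points helpful.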

An example of manually picked correspondences and the recovered homography matrix is shown below.

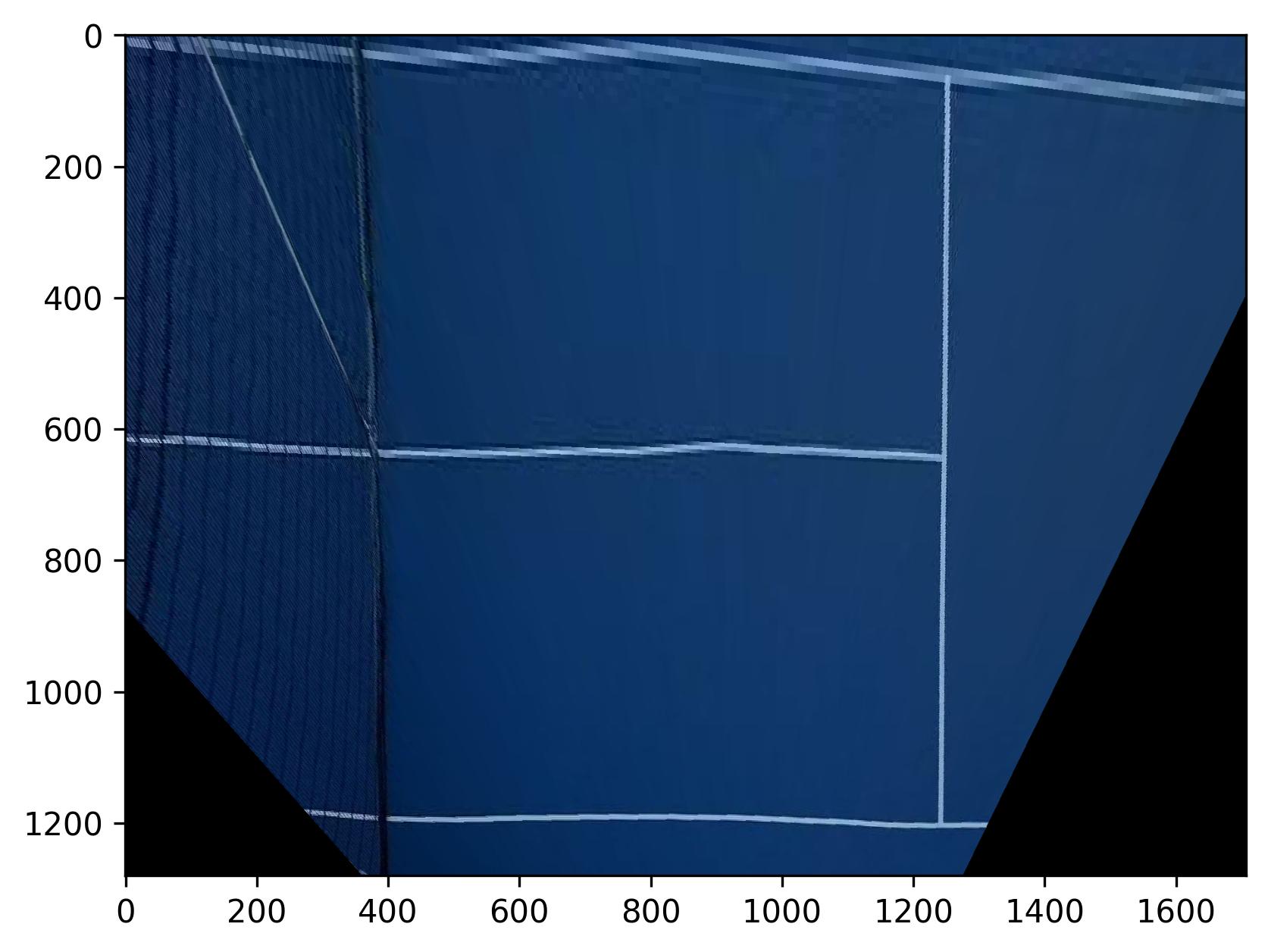

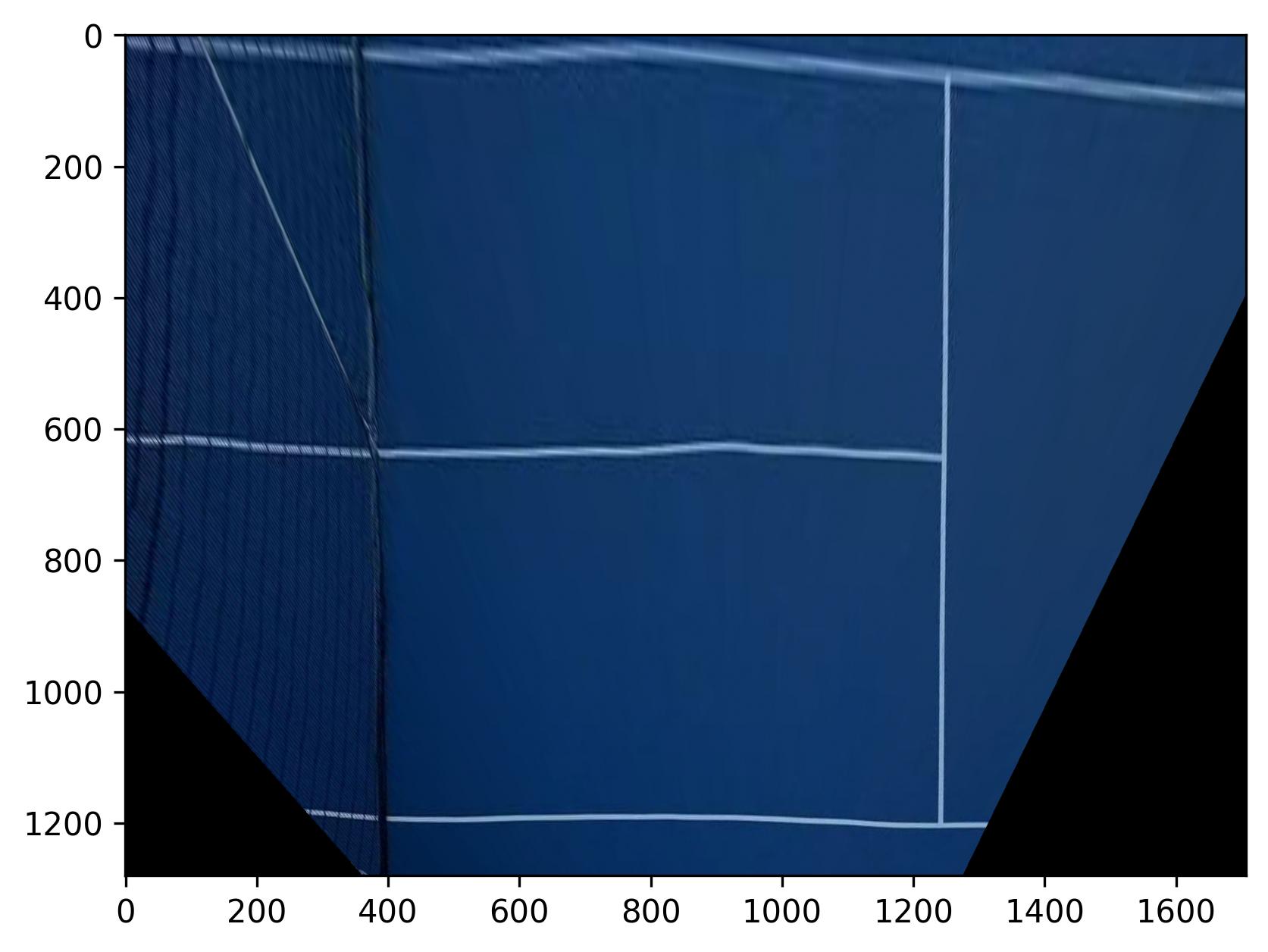

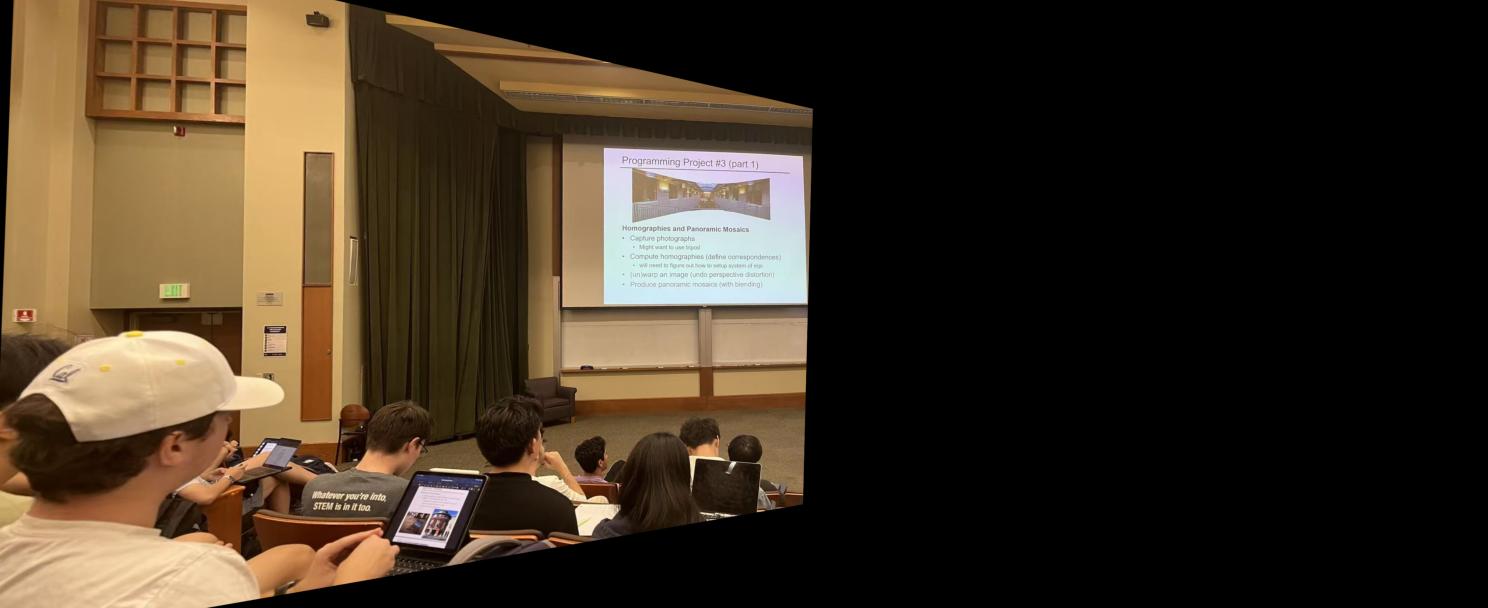

This part introduces two methods to warp the images after recovering the homography matrix: nearest neighbor and bilinear interpolation. In both cases, each output pixel is mapped back into the original image by inverse mapping. The nearest neighbor method is the simpler of the two: it takes the value of the nearest pixel in the original image. The bilinear interpolation method takes the four nearest pixels in the original image and uses their weighted average as the value of the warped pixel. Here are some examples of the warped images (rectification) with both methods.

What? The lines of a tennis court are not straight? That is probably because of camera distortion in the original image.

Note that nearest neighbor warping results in more artifacts than bilinear interpolation. An example can be found at the top of the warped Channing court image.
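The two sampling strategies can be sketched as follows. This is an illustrative NumPy version, not the project's code: the name `warp_image`, the `method` flag, and the choice to leave out-of-bounds pixels at zero are all assumptions. `H` is taken to map source coordinates to output coordinates, so the sketch applies its inverse to every output pixel.

```python
import numpy as np

def warp_image(img, H, out_shape, method="bilinear"):
    """Inverse-warp img (H x W or H x W x C) into an array of size out_shape."""
    h_out, w_out = out_shape
    h_in, w_in = img.shape[:2]
    ys, xs = np.mgrid[0:h_out, 0:w_out]
    pts = np.stack([xs.ravel(), ys.ravel(), np.ones(xs.size)])
    # Inverse mapping: where does each output pixel come from in the source?
    # (Assumes the third homogeneous coordinate stays away from zero.)
    src = np.linalg.inv(H) @ pts
    sx, sy = src[0] / src[2], src[1] / src[2]
    # Keep only samples that fall inside the source image.
    ok = (sx >= 0) & (sx <= w_in - 1) & (sy >= 0) & (sy <= h_in - 1)
    sx, sy = sx[ok], sy[ok]
    out = np.zeros((h_out * w_out,) + img.shape[2:], dtype=float)
    if method == "nearest":
        # Take the value of the single closest source pixel.
        out[ok] = img[np.rint(sy).astype(int), np.rint(sx).astype(int)]
    else:
        # Top-left corner of the 2x2 neighborhood, clipped so x0+1, y0+1 exist.
        x0 = np.clip(np.floor(sx).astype(int), 0, w_in - 2)
        y0 = np.clip(np.floor(sy).astype(int), 0, h_in - 2)
        fx, fy = sx - x0, sy - y0
        if img.ndim == 3:
            fx, fy = fx[:, None], fy[:, None]  # broadcast over channels
        # Weighted average of the four neighbors.
        out[ok] = ((1 - fx) * (1 - fy) * img[y0, x0]
                   + fx * (1 - fy) * img[y0, x0 + 1]
                   + (1 - fx) * fy * img[y0 + 1, x0]
                   + fx * fy * img[y0 + 1, x0 + 1])
    return out.reshape((h_out, w_out) + img.shape[2:])
```

Because nearest neighbor snaps every sample to an integer location, edges tend to look jagged after warping, while the bilinear average smooths them at the cost of slight blur.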

This part of the project blends the images into a mosaic. The procedure is simple:

Below are some examples of the stitched images. The first set of images will be used as an example to demonstrate the procedure, and the intermediate results for the other two sets of images will not be shown here for brevity.

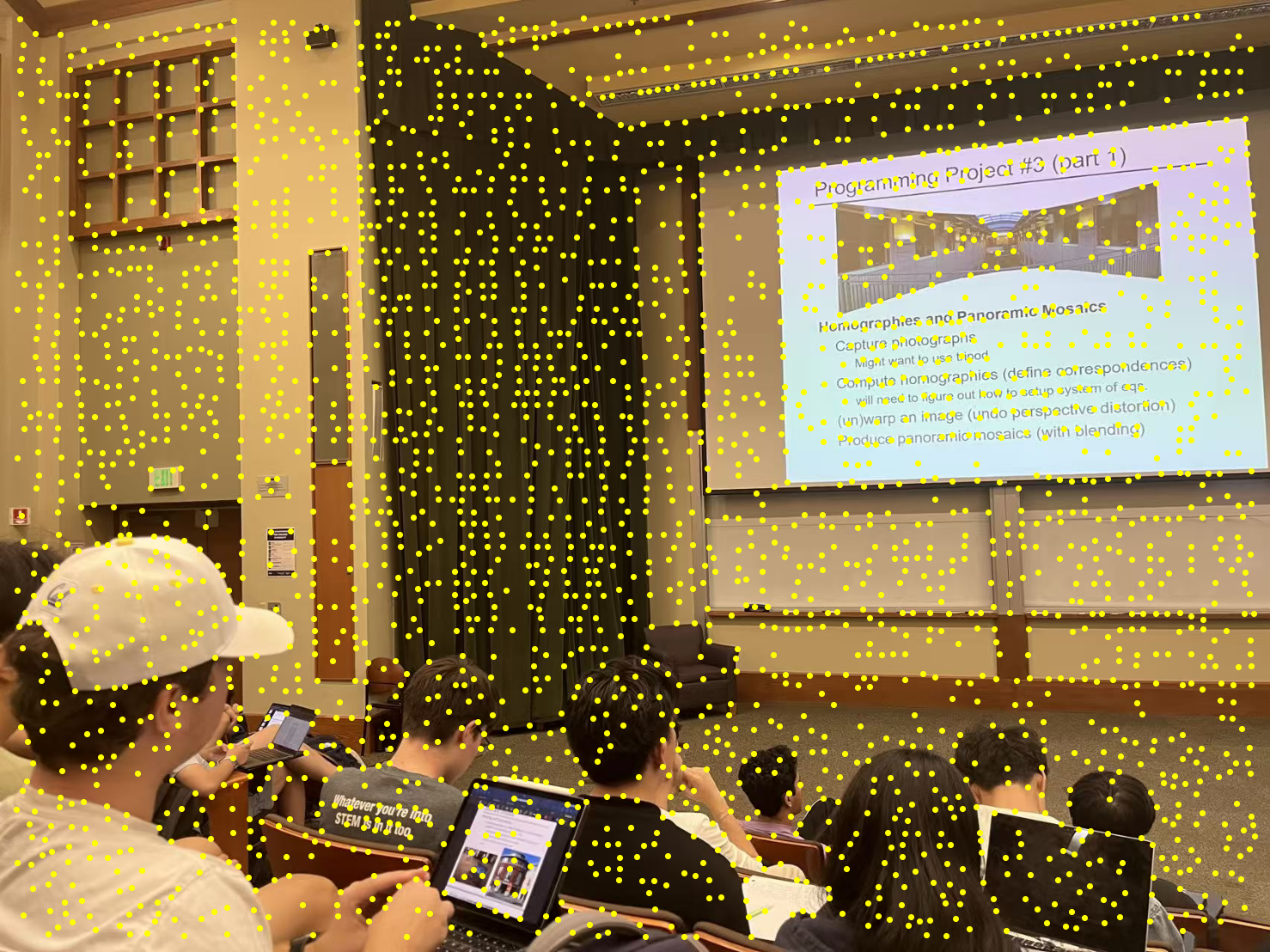

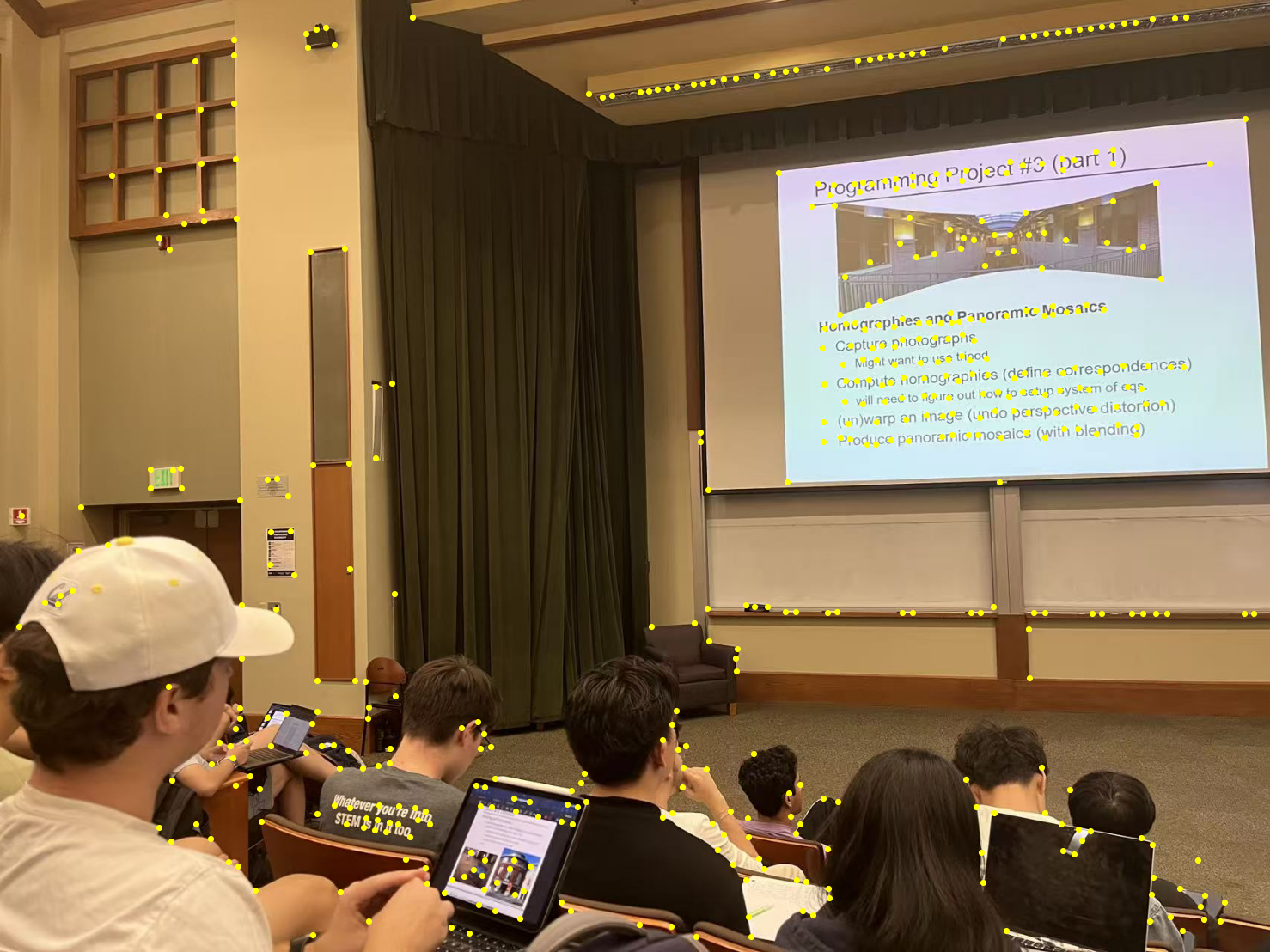

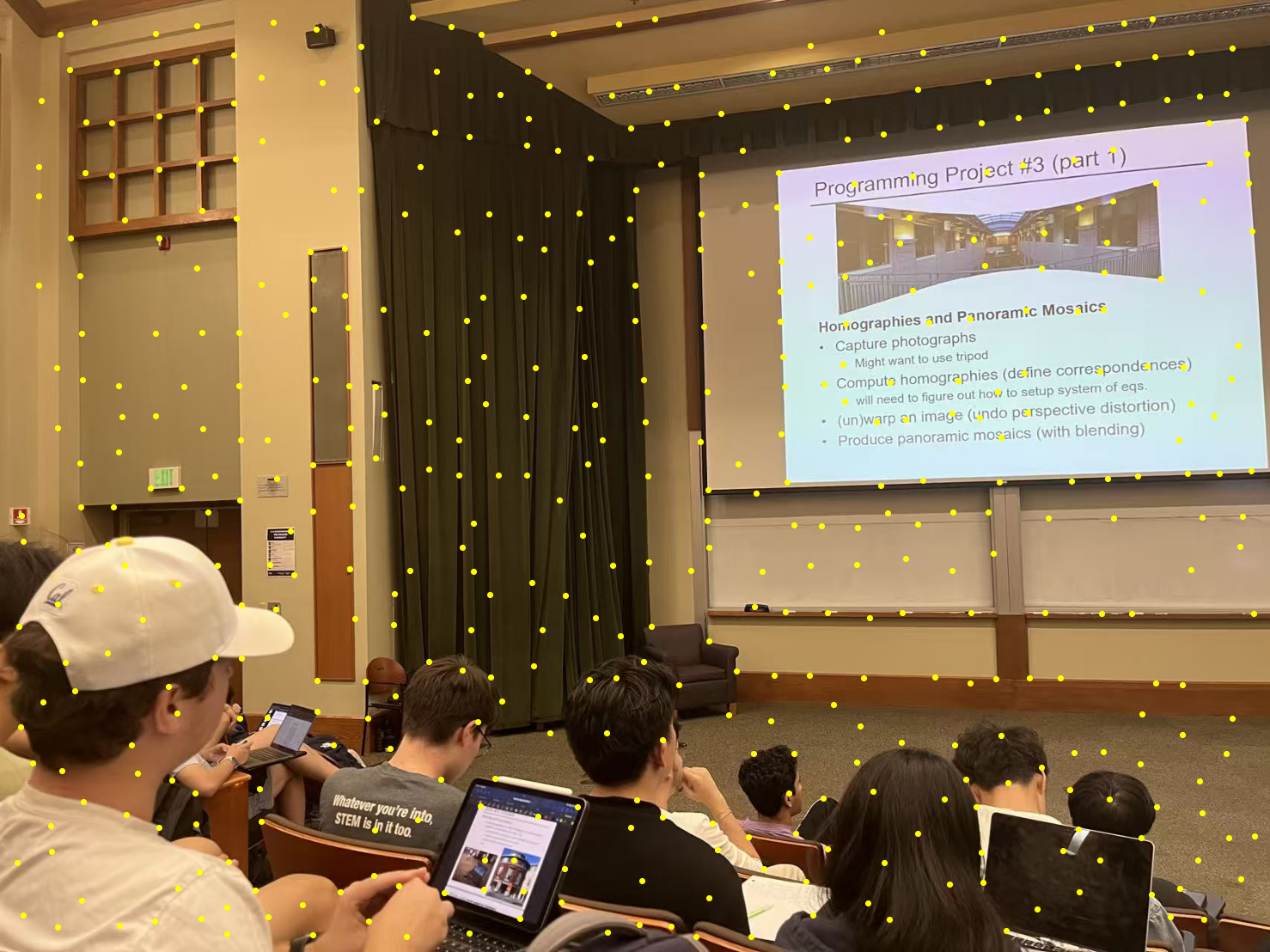

In this section, I use the Harris corner detection algorithm to detect corners in the images. However, naively choosing the strongest corners leads to an uneven distribution of features. Therefore, I applied Adaptive Non-Maximal Suppression (ANMS) to choose the corners. The following images are the results of the Harris corner detection and the Adaptive Non-Maximal Suppression.

ANMS is implemented by the following steps:
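A minimal sketch of one common ANMS formulation, which may differ in detail from the steps used in this project. Each corner gets a suppression radius equal to its distance to the nearest corner that is sufficiently stronger, and the corners with the largest radii are kept. The function name `anms`, the robustness factor `c_robust=0.9`, and the default `num_keep` are assumptions for illustration.

```python
import numpy as np

def anms(coords, strengths, num_keep=500, c_robust=0.9):
    """Return indices of the corners kept by ANMS, largest radius first.

    coords:    (N, 2) corner locations.
    strengths: (N,) Harris response of each corner.
    """
    coords = np.asarray(coords, dtype=float)
    strengths = np.asarray(strengths, dtype=float)
    # Squared distance between every pair of corners, shape (N, N).
    # Note: O(N^2) memory, so pre-filter weak corners for large N.
    d2 = ((coords[:, None, :] - coords[None, :, :]) ** 2).sum(axis=-1)
    # stronger[i, j] is True when corner j is sufficiently stronger than corner i.
    stronger = strengths[:, None] < c_robust * strengths[None, :]
    # Ignore corners that are not sufficiently stronger.
    d2 = np.where(stronger, d2, np.inf)
    # Squared suppression radius; infinite for the globally strongest corner.
    radii = d2.min(axis=1)
    return np.argsort(-radii)[:num_keep]
```

Sorting by radius rather than by raw strength is what spreads the selected corners across the image: a strong corner sitting next to an even stronger one receives a small radius and is dropped early.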

This part of the project implements the feature descriptor extraction. Each feature descriptor is an 8 by 8 square patch, downsampled from a 40 by 40 patch at the original scale. All the descriptors here are axis-aligned, since the camera was not rolled between shots. The following images are some examples of the feature descriptors from the left lecture image.

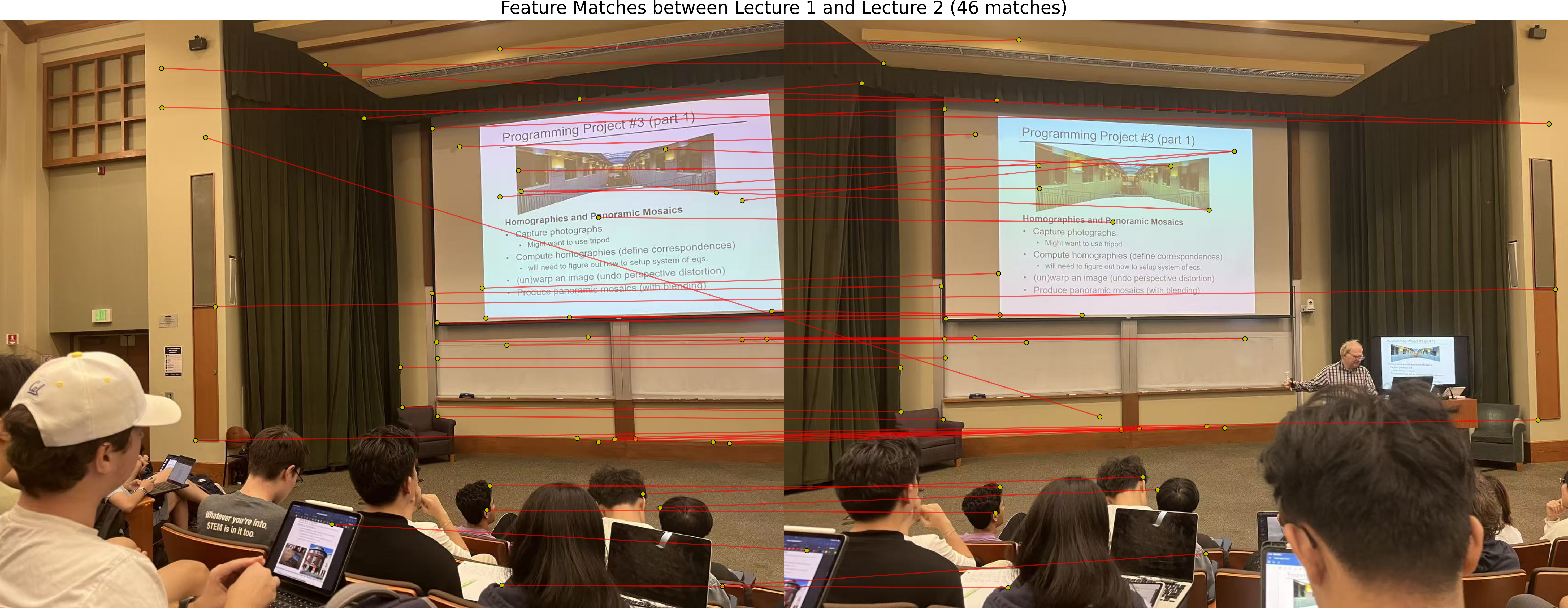
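The 40 by 40 to 8 by 8 extraction can be sketched as below. Several details are assumptions not stated above: the downsampling is done by averaging 5 by 5 blocks, the patch is normalized to zero mean and unit variance, and corners too close to the border are skipped. The name `extract_descriptor` is hypothetical.

```python
import numpy as np

def extract_descriptor(gray, x, y, patch=40, out=8):
    """Axis-aligned descriptor around integer pixel (x, y) of a grayscale image.

    Returns a flat vector of length out * out, or None near the border.
    """
    half = patch // 2
    h, w = gray.shape
    # Skip corners whose 40x40 window would leave the image (assumption).
    if x - half < 0 or y - half < 0 or x + half > w or y + half > h:
        return None
    window = gray[y - half:y + half, x - half:x + half].astype(float)
    # Downsample by averaging non-overlapping blocks (assumption: 5x5 block mean).
    step = patch // out
    small = window.reshape(out, step, out, step).mean(axis=(1, 3))
    # Bias/gain normalization (assumption): zero mean, unit variance.
    small = (small - small.mean()) / (small.std() + 1e-8)
    return small.ravel()
```

Averaging over blocks acts as a crude low-pass filter before subsampling, which makes the descriptor less sensitive to small localization errors than sampling every fifth pixel directly.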

This part of the project implements the feature matching process. The matching iterates through all the feature descriptors; for each descriptor, we compute the ratio between the errors of its best and second-best matches. If the ratio is less than 0.7, we consider the best match a good match.
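The ratio test can be sketched as follows, assuming the error is the sum of squared differences (SSD) between flattened descriptors; the error metric and the name `match_features` are assumptions. The 0.7 threshold is the one stated above.

```python
import numpy as np

def match_features(desc1, desc2, ratio=0.7):
    """Return an (M, 2) array of index pairs (i, j) that pass the ratio test.

    desc1: (N1, D) descriptors from image 1.
    desc2: (N2, D) descriptors from image 2, with N2 >= 2.
    """
    desc1 = np.asarray(desc1, dtype=float)
    desc2 = np.asarray(desc2, dtype=float)
    # SSD between every descriptor pair, shape (N1, N2).
    d = ((desc1[:, None, :] - desc2[None, :, :]) ** 2).sum(axis=-1)
    order = np.argsort(d, axis=1)
    rows = np.arange(len(desc1))
    best = d[rows, order[:, 0]]
    second = d[rows, order[:, 1]]
    # best / second < ratio, written without a division to avoid 0 / 0.
    good = best < ratio * second
    return np.stack([rows[good], order[good, 0]], axis=1)
```

A descriptor whose best and second-best matches have similar errors is ambiguous, so it is rejected even when its best error is small.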

This part of the project implements the RANSAC algorithm to robustly estimate the homography matrix. The RANSAC algorithm is implemented by the following steps:
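A minimal sketch of a standard 4-point RANSAC loop for homographies, which may differ in detail from the steps used in this project. The helper names, the iteration count, the pixel threshold, and the fixed random seed are all assumptions chosen for illustration.

```python
import numpy as np

def fit_homography(src, dst):
    """Least-squares homography with H[2, 2] = 1 (hypothetical helper)."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.extend([u, v])
    h, *_ = np.linalg.lstsq(np.array(rows, float), np.array(rhs, float), rcond=None)
    return np.append(h, 1.0).reshape(3, 3)

def project(H, pts):
    """Apply H to (N, 2) points and convert back from homogeneous coordinates."""
    p = np.c_[pts, np.ones(len(pts))] @ H.T
    return p[:, :2] / p[:, 2:3]

def ransac_homography(src, dst, n_iters=1000, threshold=2.0, seed=0):
    """Return (H, inlier_mask) estimated from putative matches src -> dst."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    rng = np.random.default_rng(seed)
    best_inliers = np.zeros(len(src), dtype=bool)
    for _ in range(n_iters):
        # 1. Sample 4 matches and fit an exact homography to them.
        sample = rng.choice(len(src), size=4, replace=False)
        H = fit_homography(src[sample], dst[sample])
        # 2. Count matches whose reprojection error is under the threshold.
        with np.errstate(divide="ignore", invalid="ignore"):
            err = np.linalg.norm(project(H, src) - dst, axis=1)
        inliers = err < threshold
        # 3. Keep the largest consensus set seen so far.
        if inliers.sum() > best_inliers.sum():
            best_inliers = inliers
    if best_inliers.sum() < 4:
        raise ValueError("RANSAC found fewer than 4 inliers")
    # 4. Refit on all inliers of the best model.
    return fit_homography(src[best_inliers], dst[best_inliers]), best_inliers
```

The final least-squares refit over all inliers usually gives a more accurate homography than any single 4-point sample, since it averages out localization noise in the matched corners.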

The following images are some examples of the auto-stitched images, along with a comparison against the stitching done with manually picked correspondences.